Creating an OpenVINO Classifier Capsule

Introduction

This tutorial will guide you through encapsulating an OpenVINO classifier model. We will be using the vehicle-attributes-recognition-barrier-0039 model from the Open Model Zoo, which classifies the color of a detected vehicle, but the concepts shown here apply to all OpenVINO classifiers. You can find the complete capsule on the Capsule Zoo.

This capsule will rely on the detector created in the previous tutorial to find vehicles in the video frame before they can be classified.

Getting Started

As in the previous tutorial, we will create a new directory for the classifier capsule. This time we will name it classifier_vehicle_color_openvino. We will also add a meta.conf with the same contents, declaring that our capsule relies on version 0.3 or higher of OpenVisionCapsules.

[about]

api_compatibility_version = 0.3
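As a rough illustration of what this file declares, the sketch below parses the same contents with Python's standard configparser module. This is not how OpenVisionCapsules itself reads meta.conf; the parse_api_version helper is hypothetical and only shows that the file is ordinary INI syntax holding a major and minor version number.

```python
import configparser

# Same contents as the meta.conf shown above.
META_CONF = """
[about]
api_compatibility_version = 0.3
"""


def parse_api_version(text: str) -> tuple:
    """Return the declared version as a (major, minor) tuple.

    Hypothetical helper for illustration; not part of vcap.
    """
    parser = configparser.ConfigParser()
    parser.read_string(text)
    raw = parser["about"]["api_compatibility_version"]
    major, minor = raw.split(".")
    return int(major), int(minor)


version = parse_api_version(META_CONF)
print(version)
```

Splitting the value into integers rather than reading it as a float avoids treating a version such as 0.10 as if it were 0.1.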

We will also add the weights and model files to this directory so that they can be loaded by the capsule.

The Capsule Class

The Capsule class defined here will be very similar in structure to the one in the detector capsule.

from vcap import BaseCapsule, NodeDescription, DeviceMapper

from .backend import Backend

from . import config

class Capsule(BaseCapsule):

name = "classifier_vehicle_color_openvino"

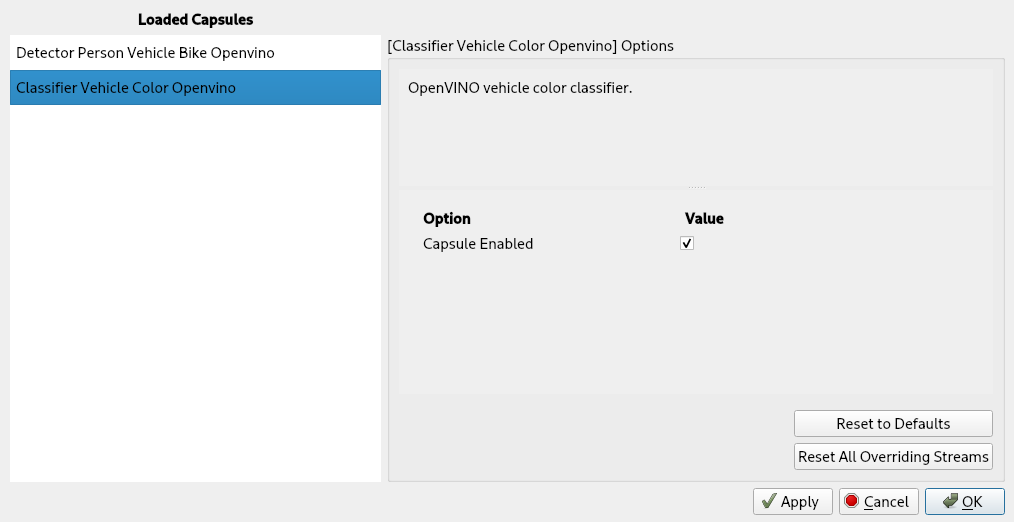

description = "OpenVINO vehicle color classifier."

version = 1

device_mapper = DeviceMapper.map_to_openvino_devices()

input_type = NodeDescription(

size=NodeDescription.Size.SINGLE,

detections=["vehicle"])

output_type = NodeDescription(

size=NodeDescription.Size.SINGLE,

detections=["vehicle"],

attributes={"color": config.colors})

backend_loader = lambda capsule_files, device: Backend(

model_xml=capsule_files[

"vehicle-attributes-recognition-barrier-0039.xml"],

weights_bin=capsule_files[

"vehicle-attributes-recognition-barrier-0039.bin"],

device_name=device

)

Let's take a look at some of the differences between this Capsule class and the detector's.

input_type = NodeDescription(
    size=NodeDescription.Size.SINGLE,
    detections=["vehicle"])

This capsule takes vehicle detections produced by the detector capsule as input. Each vehicle found in the video frame is processed one at a time.

output_type = NodeDescription(
    size=NodeDescription.Size.SINGLE,
    detections=["vehicle"],
    attributes={"color": config.colors})

This capsule provides a vehicle detection with a color attribute as output. Note that classifier capsules do not create new detections. Instead, they augment the detections provided to them by other capsules. We've moved the list of colors out into a separate config.py file so that it can also be referenced by the backend, which we will define in the next section.

# config.py

colors = ["white", "gray", "yellow", "red", "green", "blue", "black"]

You may have noticed that this capsule does not have any options. The options field can be omitted when the capsule doesn't have any parameters that can be modified at runtime.

The Backend Class

We will once again create a file called backend.py where the Backend class will be defined. It will still subclass BaseOpenVINOBackend and we will only need to implement the process_frame method.

from collections import namedtuple
from typing import Dict

import numpy as np

from vcap import (
    Resize,
    DETECTION_NODE_TYPE,
    OPTION_TYPE,
    BaseStreamState)
from vcap_utils import BaseOpenVINOBackend

from . import config


class Backend(BaseOpenVINOBackend):

    def process_frame(self, frame: np.ndarray,
                      detection_node: DETECTION_NODE_TYPE,
                      options: Dict[str, OPTION_TYPE],
                      state: BaseStreamState) -> DETECTION_NODE_TYPE:
        crop = Resize(frame).crop_bbox(detection_node.bbox).frame

        input_dict, _ = self.prepare_inputs(crop)
        prediction = self.send_to_batch(input_dict).result()

        max_color = config.colors[prediction["color"].argmax()]

        detection_node.attributes["color"] = max_color

Let's review this method line-by-line.

crop = Resize(frame).crop_bbox(detection_node.bbox).frame

Capsules always receive the entire video frame, so we need to start by cropping the frame to the detected vehicle.
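To make the cropping step concrete, here is a minimal sketch of the idea in plain Python. It does not use vcap's Resize class: the BBox type and crop_to_bbox function are hypothetical stand-ins, and the frame is a nested list rather than a NumPy array.

```python
from typing import List, NamedTuple


class BBox(NamedTuple):
    """Hypothetical stand-in for a detection's bounding box, in pixels."""
    x1: int
    y1: int
    x2: int
    y2: int


def crop_to_bbox(frame: List[List[int]], bbox: BBox) -> List[List[int]]:
    """Keep only the rows and columns that fall inside the box."""
    return [row[bbox.x1:bbox.x2] for row in frame[bbox.y1:bbox.y2]]


# A tiny 4x6 "frame" whose pixel values encode their own position.
frame = [[10 * y + x for x in range(6)] for y in range(4)]
crop = crop_to_bbox(frame, BBox(x1=2, y1=1, x2=5, y2=3))
print(crop)
```

The classifier only ever sees the pixels inside the box, which is why it can be applied to each vehicle independently.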

input_dict, _ = self.prepare_inputs(crop)

We then prepare the cropped video frame to be fed into the model. The video frame is resized to fit into the model and formatted in the way the model expects. We can ignore the second return value, the resize object, because classifiers don't provide any coordinates that need adjusting.

prediction = self.send_to_batch(input_dict).result()

Next, the input data is sent into the model for batch processing. The call to result() causes the backend to block until the result is ready. The results are objects with raw OpenVINO prediction information.
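The submit-then-block pattern can be illustrated with Python's standard concurrent.futures module. This sketch does not use vcap or OpenVINO: fake_inference and its scores are made up, and a single worker thread stands in for the batching machinery.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def fake_inference(input_dict: dict) -> dict:
    """Hypothetical stand-in for the model: returns fixed color scores."""
    time.sleep(0.05)  # pretend the model takes a moment
    return {"color": [0.05, 0.1, 0.05, 0.6, 0.05, 0.1, 0.05]}


with ThreadPoolExecutor(max_workers=1) as executor:
    # Submitting returns immediately with a handle to the pending work.
    future = executor.submit(fake_inference, {"input": "cropped frame"})
    # result() blocks the calling thread until the worker has finished.
    prediction = future.result()

print(prediction["color"])
```

Handing work off this way lets requests be grouped and run together while each caller simply waits for its own answer.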

max_color = config.colors[prediction["color"].argmax()]

We then pull the color information from the prediction and choose the color with the highest confidence. That color is converted from its integer representation to a human-readable string using the colors list defined in config.py.
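The index-to-name lookup can be sketched without NumPy as follows. The scores are invented for illustration, and most_likely_color is a hypothetical helper that does the same job as indexing the colors list with an argmax.

```python
colors = ["white", "gray", "yellow", "red", "green", "blue", "black"]


def most_likely_color(scores):
    """Return the color name whose score is highest.

    Plain-Python equivalent of indexing `colors` with an argmax.
    """
    best_index = max(range(len(scores)), key=lambda i: scores[i])
    return colors[best_index]


# Made-up scores, one per entry in `colors`.
example_scores = [0.02, 0.08, 0.01, 0.70, 0.04, 0.10, 0.05]
print(most_likely_color(example_scores))
```

This only works if the order of the colors list matches the order of the model's output, which is why the list lives in one shared config.py.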

detection_node.attributes["color"] = max_color

Finally, we augment the vehicle detection with the new "color" attribute. This capsule does not need to return anything because no new detections have been created.
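The in-place update can be pictured with a small stand-alone sketch. FakeDetection is a hypothetical dataclass used only for illustration and is not the vcap detection type.

```python
from dataclasses import dataclass, field
from typing import Dict


@dataclass
class FakeDetection:
    """Hypothetical stand-in for a detection node; not the vcap class."""
    class_name: str
    attributes: Dict[str, str] = field(default_factory=dict)


def classify_in_place(detection: FakeDetection, color: str) -> None:
    """Attach the attribute to the existing detection and return nothing."""
    detection.attributes["color"] = color


vehicle = FakeDetection(class_name="vehicle")
returned = classify_in_place(vehicle, "red")
print(vehicle.attributes, returned)
```

Because the caller already holds a reference to the detection, mutating it is enough for the new attribute to be visible downstream.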

Wrapping Up

The capsule is now complete! Your data directory should look something like this:

your_data_directory
├── volumes
└── capsules
    └── classifier_vehicle_color_openvino
        ├── backend.py
        ├── capsule.py
        ├── config.py
        ├── meta.conf
        ├── vehicle-attributes-recognition-barrier-0039.bin
        └── vehicle-attributes-recognition-barrier-0039.xml

When you restart BrainFrame, your capsule will be packaged into a .cap file and initialized. You'll see its information on the BrainFrame client.

Load up a video stream to see classification results.